You Can't Build an AI Solution If You Haven't Diagnosed the Problem

Key Takeaways:

- The Shift: Enterprise AI is moving from broad LLMs (Large Language Models) to specialized, domain-specific applications.

- The Strategy: Success requires a focus on data sovereignty, infrastructure scalability, and ROI-driven use cases.

- The Goal: Transitioning GenAI from a "shiny object" to a core utility that drives measurable business outcomes.

Artificial Intelligence (AI) is universally heralded as a foundational technology capable of redefining enterprise productivity and competitive differentiation. Gartner projects that worldwide AI spending will total $1.5 trillion in 2025. While organizations have deployed capital at an unprecedented scale, the transition from experimental curiosity to operational backbone has revealed a fundamental inability among most corporations to innovate.

This crisis is not a byproduct of insufficient computational power or the limitations of large language models; rather, it’s the result of a profound diagnostic failure. Studies from 2026 suggest that the vast majority of AI initiatives are doomed before the first line of code is written because organizations have failed to accurately diagnose the problems they intend to solve.

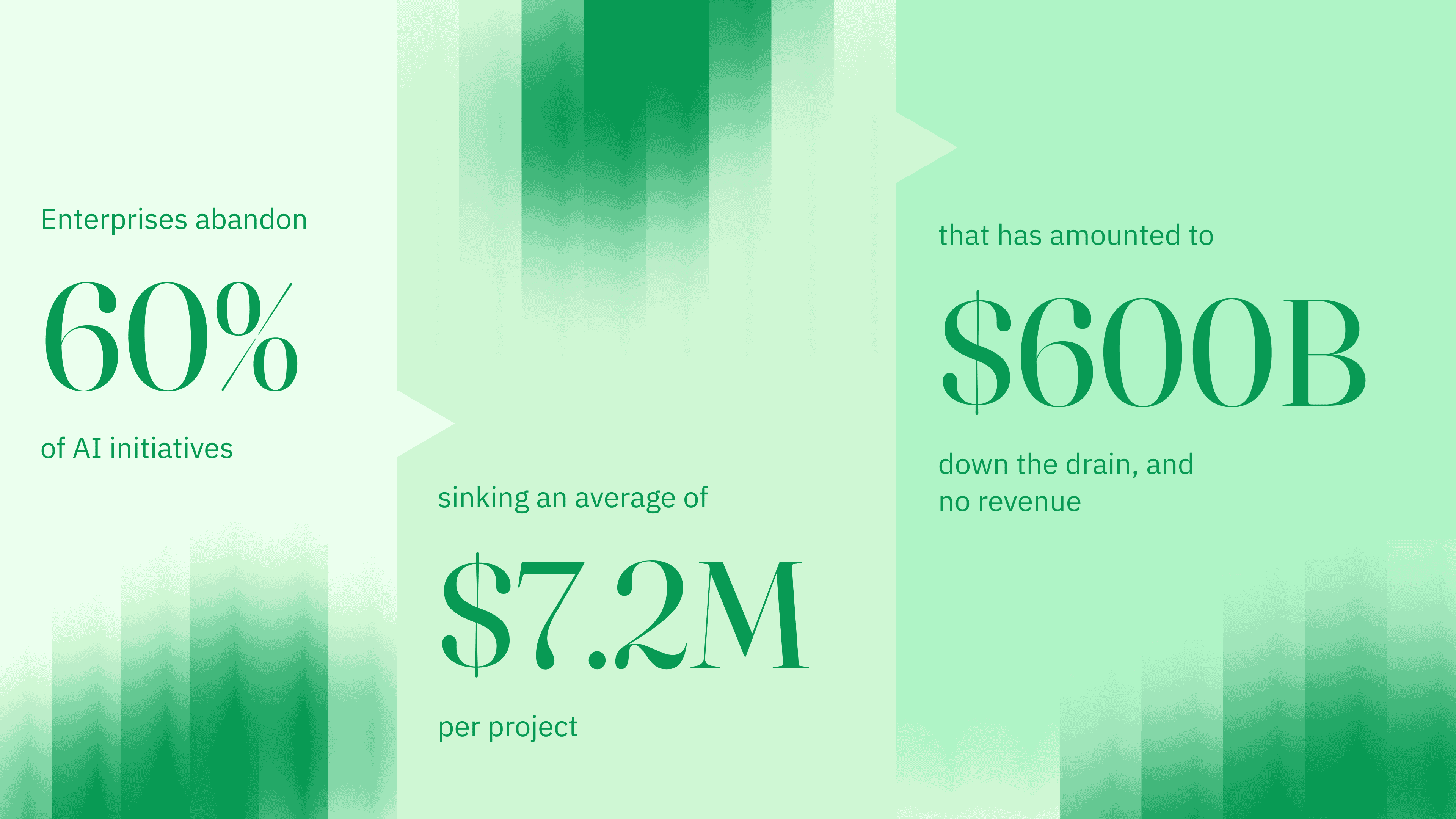

Over Half of Enterprises Are Abandoning Multi-Million Dollar AI Initiatives

Research from MIT's Project NANDA, published in July 2025, provided the most sobering assessment of the era: 95% of organizations deploying generative AI reported zero measurable return on their investment. This figure represents more than a mere lack of profitability; it signifies a total absence of sustained productivity gains or documented impact on enterprise EBIT.

As enterprises subject years-old pilots to their first serious budget reviews in 2026, programs are being canceled at an accelerating rate.

| Economic Indicator of AI Implementation (2025-2026) | Value/Statistic |
|---|---|
| Projected enterprise abandonment rate for data-unready projects | 60% |
| Average sunk cost of an abandoned AI initiative | $7.2M |
| Total ROI gap (Capital Deployed vs. Revenue Generated) | $600B |

The failure to diagnose the problem manifests as a "slow leak" rather than a dramatic crash. Projects frequently lose momentum as they move toward production, with costs escalating far beyond initial projections while expectations remain unmet. This attrition is driven by the realization that many initiatives were technology-led rather than outcome-led, resulting in "brochureware" AI that automates tasks in isolation without improving business outcomes.

The Primary Cause of AI Failure? A Solution in Search of a Problem

The most fundamental issue is the misidentification of the core business problem. This failure occurs at the intersection of leadership vision and technical execution. Business leaders often articulate desired outcomes in vague or aspirational terms, which technical teams are then forced to interpret without a concrete operational map.

This misalignment is pervasive. In late 2024, Gallup research found that only 15% of U.S. employees felt their workplace had communicated a clear AI strategy. When strategy is absent at the workforce level, the definition of specific problems at the project level is almost certainly degraded. Organizations that succeed in capturing meaningful ROI are twice as likely to have redesigned their end-to-end workflows before ever selecting a modeling technique or vendor.

A recurring root cause identified in 2025 and 2026 is the "technology-first" sequence, where companies select a tool or platform before they have established the necessary conditions for its success. This is often driven by a desire to keep pace with competitors rather than a diagnostic assessment of internal bottlenecks. When teams start with the technology, they often end up with a solution in search of a problem, leading to the creation of "dashboard junk" or AI features that are technically sound but operationally irrelevant.

The diagnostic failure is compounded by a profound leadership gap. Approximately 63% of data leaders admit they lack the foundational data practices required for AI success. This vacuum in middle management and leadership tiers often means that no one has the authority or expertise to say "no" to a flawed AI project before significant capital is sunk into it.

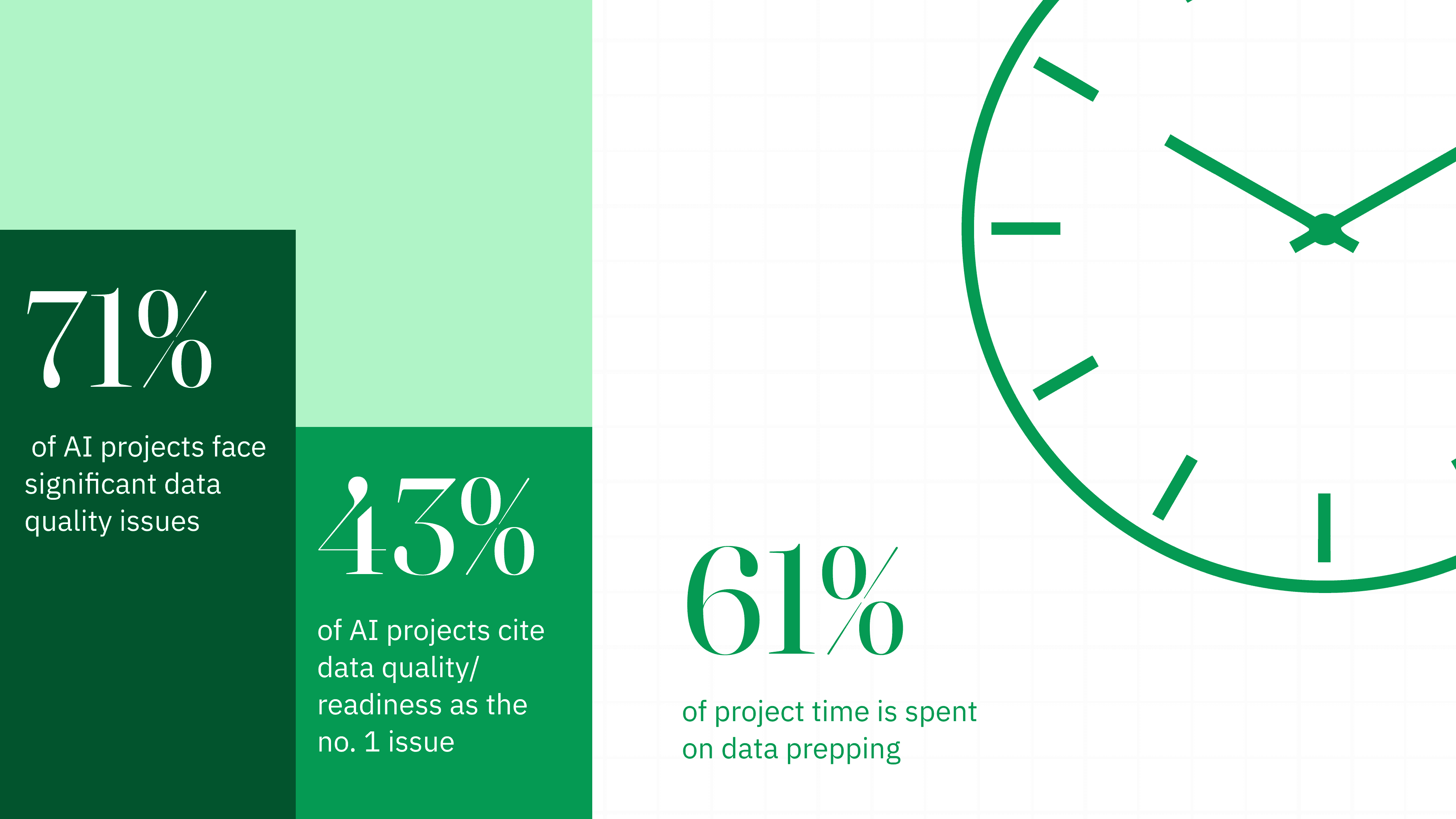

AI's Biggest Challenge: Data Readiness, Not Data Quality

One of the most frequent excuses for AI failure is "poor data quality," but in 2026, analysts have refined this diagnosis. The issue is not necessarily a lack of data, but a lack of "AI-ready" data. Organizations have historically managed data for human consumption or traditional business intelligence, but AI models require data that is structured for machine inference, semantically grounded, and contextually resilient.

Gartner predicts that, through 2026, organizations will abandon 60% of AI projects that are not supported by AI-ready data. The gap between traditional data management and AI readiness is often discovered too late; on average, organizations identify insurmountable data quality issues 5.2 months into a project, well after significant investment has occurred.

In 2026, the industry has realized that AI readiness is essentially a metadata problem. AI-ready data is defined by its ability to have its fitness for a specific use case proven contextually and continuously. This requires active metadata management, a practice that only a fraction of enterprises currently maintain. Without this "unified context layer," AI agents operate on fragmented information, leading to hallucinations and unreliable outputs.

| Data Readiness Obstacle | Percentage of Respondents/Projects |
|---|---|
| Data Quality/Readiness as Number One Obstacle | 43% |
| Projects with Significant Data Quality Issues | 71% |
| Average Time Spent on Data Preparation | 61% of Timeline |

The diagnostic failure regarding data is often rooted in "data debt" that compounds silently over time. Outdated, duplicate records across systems and missing metadata are often overlooked during the pilot phase because demos run on small, curated datasets. When these systems are introduced to the messy, inconsistent reality of production-grade enterprise data, they inevitably falter.
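The kinds of data debt described above (duplicates, outdated records, missing metadata) can be measured before a pilot begins. The following is a minimal sketch, not a prescribed method: the record layout, the field names (`customer_id`, `owner`, `source_system`, `last_updated`), and the one-year staleness threshold are all assumptions chosen for illustration.

```python
from collections import Counter
from datetime import date

# Metadata fields every record is expected to carry (assumed for this sketch).
REQUIRED_METADATA = ("owner", "source_system", "last_updated")


def readiness_report(records, today, max_age_days=365):
    """Count duplicate, stale, and metadata-incomplete records."""
    # A record is a duplicate if its customer_id has already appeared.
    id_counts = Counter(r["customer_id"] for r in records)
    duplicates = sum(n - 1 for n in id_counts.values() if n > 1)

    # A record is incomplete if any required metadata field is missing or None.
    missing_metadata = sum(
        1 for r in records
        if any(r.get(field) is None for field in REQUIRED_METADATA)
    )

    # A record is stale if its last update is older than max_age_days.
    stale = sum(
        1 for r in records
        if r.get("last_updated") is not None
        and (today - r["last_updated"]).days > max_age_days
    )

    return {
        "total": len(records),
        "duplicates": duplicates,
        "missing_metadata": missing_metadata,
        "stale": stale,
    }


# Hypothetical sample data standing in for production records.
records = [
    {"customer_id": 1, "owner": "sales", "source_system": "crm", "last_updated": date(2025, 11, 1)},
    {"customer_id": 1, "owner": "sales", "source_system": "erp", "last_updated": date(2022, 3, 15)},
    {"customer_id": 2, "owner": None, "source_system": "crm", "last_updated": date(2025, 6, 30)},
    {"customer_id": 3, "owner": "support", "source_system": "crm", "last_updated": None},
]

report = readiness_report(records, today=date(2026, 1, 1))
print(report)
```

Running a check like this against a sample of real production data, rather than the curated demo set, is what surfaces the problems early.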

Governance is the Culprit for Failed AI Projects

In 2026, the focus of AI implementation has shifted from chatbots to "agentic AI": autonomous systems capable of planning, reasoning, and executing multi-step tasks without continuous human input. While these systems offer higher potential for productivity gains, they also introduce a new set of diagnostic failures.

Gartner predicts that more than 40% of agentic AI projects will be abandoned by 2027. The failures are predictable but severe: runaway costs due to continuous API calls, agents that behave in ways that violate organizational policy, and the lack of a clear return on investment. The diagnostic error here is often a failure of "governance" rather than technology. Only one in five companies has a mature model for the governance of autonomous AI agents.
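One simple governance control against runaway API costs is a hard budget that every agent call must pass through. The sketch below is illustrative only: the `CallBudget` class, the step names, and the per-step costs are hypothetical, and a real deployment would take costs from the provider's actual billing data.

```python
class BudgetExceeded(Exception):
    """Raised when an agent call would exceed its call or cost cap."""


class CallBudget:
    """Tracks calls and spend (in whole cents) against fixed limits."""

    def __init__(self, max_calls, max_cost_cents):
        self.max_calls = max_calls
        self.max_cost_cents = max_cost_cents
        self.calls = 0
        self.cost_cents = 0

    def charge(self, cost_cents):
        """Record one call, refusing it if either cap would be exceeded."""
        if self.calls + 1 > self.max_calls:
            raise BudgetExceeded("call limit reached")
        if self.cost_cents + cost_cents > self.max_cost_cents:
            raise BudgetExceeded("cost limit reached")
        self.calls += 1
        self.cost_cents += cost_cents


def run_agent(steps, budget):
    """Run steps in order until they finish or the budget halts the run."""
    completed = []
    for name, cost_cents in steps:
        try:
            budget.charge(cost_cents)
        except BudgetExceeded as exc:
            return completed, f"halted before '{name}': {exc}"
        completed.append(name)
    return completed, "finished"


# Hypothetical agent plan: (step name, estimated cost in cents).
steps = [("plan", 2), ("search", 5), ("summarize", 4), ("retry_search", 5)]
completed, status = run_agent(steps, CallBudget(max_calls=10, max_cost_cents=12))
print(completed, status)
```

The check happens before the call is made, so the agent stops at the limit instead of the overspend being reported after the fact.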

A 2026 McKinsey survey on AI Trust Maturity revealed that security concerns are now the top barrier to scaling agentic AI. As adoption expands, 74% of organizations identify inaccuracy and 72% cite cybersecurity as highly relevant risks. The diagnostic failure is the tendency to treat AI security as a secondary concern rather than a foundational requirement. This was illustrated in March 2026 when a security firm successfully compromised a major internal AI platform in under two hours, accessing millions of confidential records.

Most organizations fail to diagnose the differences between a demo environment and a production system. A working demo requires only technical feasibility; a production system requires data governance, security, workflow integration, and user trust.

Audits Set You on the Right AI Course

Before a single line of code is written, aion’s AI audit applies a fine-grained diagnostic to evaluate an organization’s AI maturity across five critical dimensions: Data Infrastructure, ML Operations, Governance & Ethics, Team Capability, and Strategy Alignment. This eliminates the most common origin of failure: building for an organization that lacks the foundational "maturity" to sustain the system.

The audit provides a clear roadmap through:

- Industry Benchmarking: Measuring readiness against peers to identify competitive gaps.

- High-Impact Use Case Identification: Pinpointing the 2-3 specific business problems where AI can move the needle fastest with estimated ROI and timelines.

- Data Readiness Review: Validating whether existing data assets are structured for machine inference before beginning development, thus preventing the "5.2-month discovery gap" where quality issues usually derail projects.

The 14-Day Bootcamp

To end "pilot purgatory," in which 46% of proofs-of-concept are scrapped before production, aion uses a forward-deployed engineering model. Instead of traditional consultants, researchers and engineers embed directly with an organization for two intensive weeks to build a production-grade AI system, not a prototype.

This bootcamp approach addresses the structural "slow leak" of AI projects by:

- Focusing on Real Business Problems: Every sprint is scoped to a specific decision or workflow identified during the audit.

- Utilizing the Nexus Platform: Building on aion’s proprietary software layer ensures that monitoring, evaluation, and governance are "baked in" from day one, rather than "bolted on" at the end of a failed pilot.

- Proving Utility in Days: By shipping a working system that runs on production data within 14 days, the bootcamp replaces "gut-feel experimentation" with verifiable evidence of success.

This methodology ensures that regulated industries can move fast without moving recklessly, turning AI from a speculative experiment into a strategic infrastructure component.