aion's 4D Playbook: A Systematic Way to Get AI Into Production

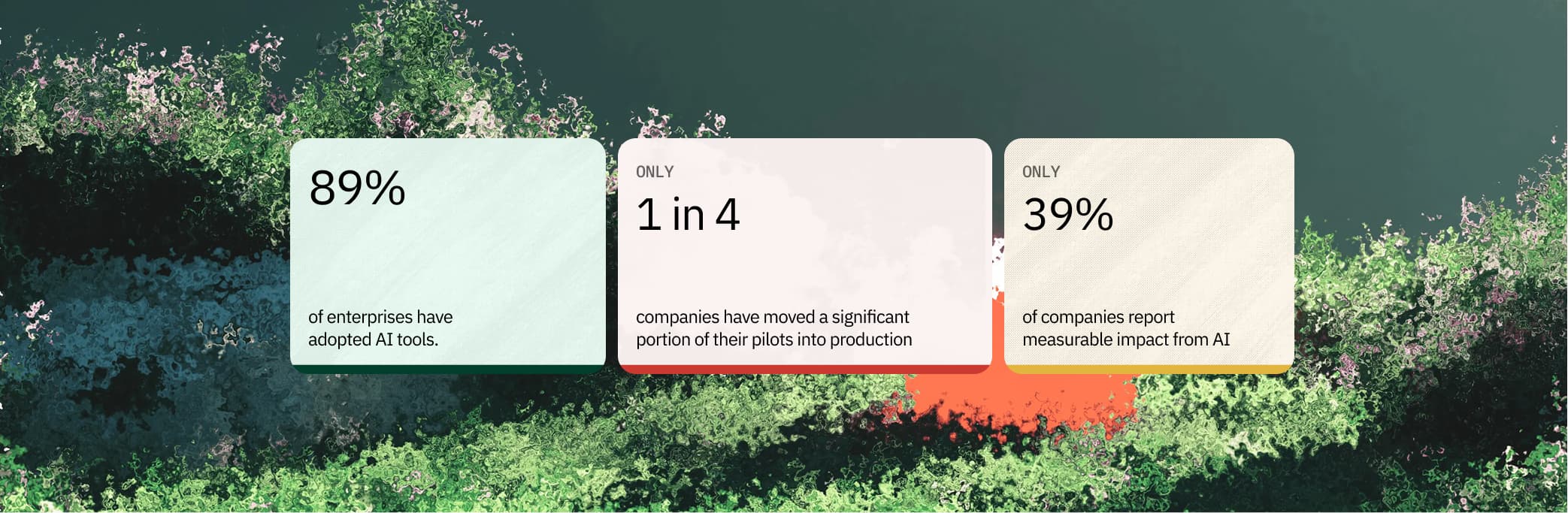

95% of enterprise generative AI pilots produce zero P&L impact. aion's 4D Playbook — Diagnosis, Days, Deployment, Drive — replaces bespoke project thinking with a sequenced operating system for getting AI into production.