Your AI Strategy Is Failing Because of Your Team, Not Your Tech

Key Takeaways:

- The Problem: Enterprise AI spending is doubling, yet 74% of organizations fail to capture meaningful value from it.

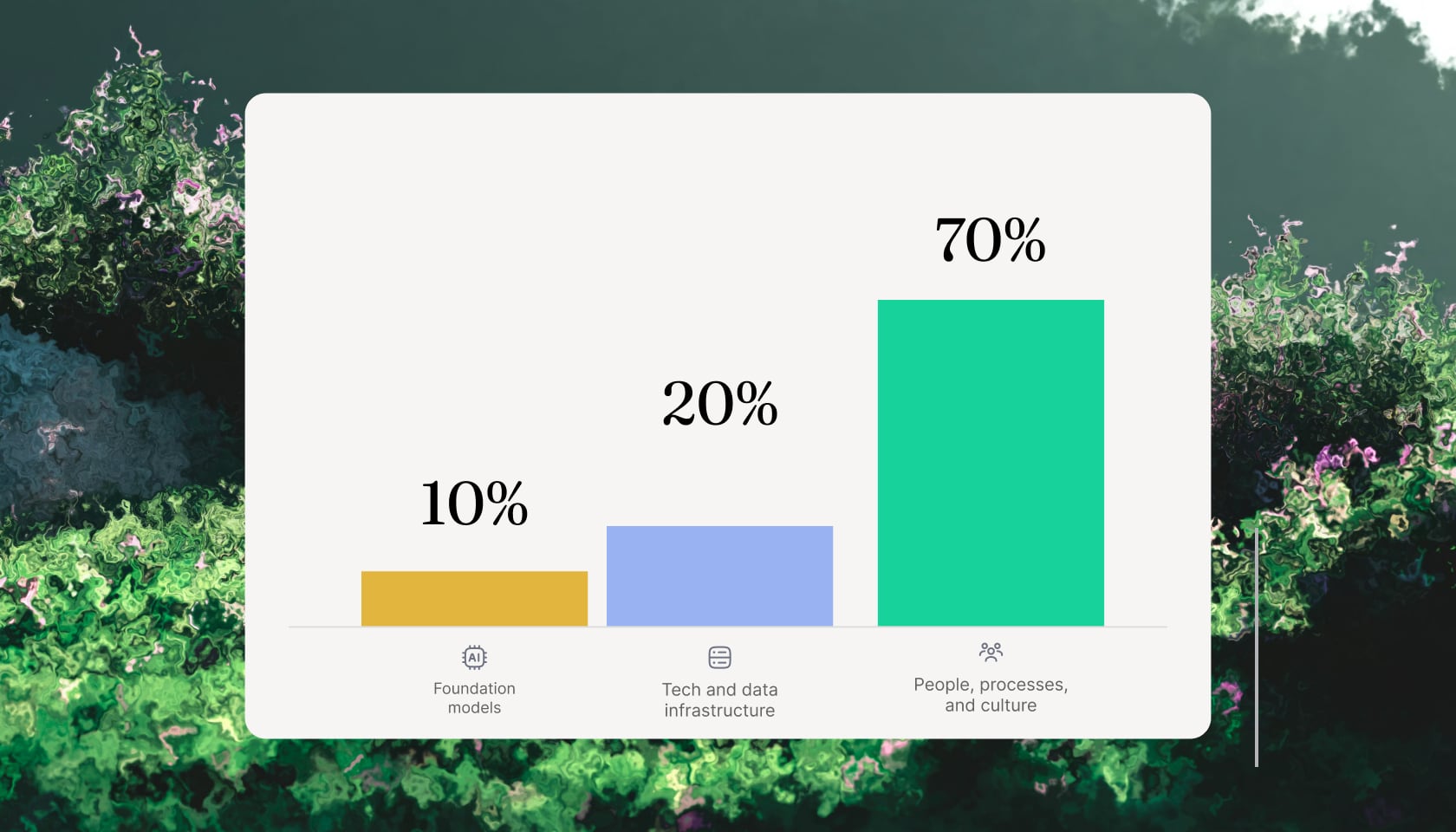

- Resource Misallocation: Most companies obsess over foundation models (10%) and infrastructure (20%) while neglecting the 70% that actually drives ROI: people, processes, and culture.

- The Proof: Morgan Stanley's embedded forward-deployed engineer (FDE) deployment hit 98% advisor adoption and 20–50% efficiency gains by building natively into existing workflows.

- The Recommendation: Treat AI as an organizational transformation, not a technology purchase, and partner with teams who own outcomes, not deliverables.

Enterprises are at a turbulent inflection point, moving rapidly from exploratory artificial intelligence (AI) experimentation into a phase that demands scalable capability. Organizations across all sectors are escalating their financial and strategic commitments to AI.

Enterprise AI spending is projected to double, reaching 1.7% of total corporate revenues in the near term. Meanwhile, 64% of surveyed organizations intend to significantly increase AI investments over the next two years, with the share of technology budgets allocated to AI rising from 8% to 13%.

Yet the majority of these initiatives fail to deliver scalable or measurable business value. BCG research shows that up to 74% of organizations struggle to achieve tangible value from their AI deployments. Integrating AI, particularly agentic systems capable of autonomous action, is not like installing traditional SaaS applications. It constitutes a fundamental reconfiguration of enterprise workflows, decision-making, and human capital deployment.

In this post, we examine the principles that govern technology adoption, chief among them the 10-20-70 rule, to understand why these strategies falter so predictably and how elite organizations avoid the same failures. Through this framework, we uncover the need for a specialized role designed to bridge the gap between raw technological capability and enterprise reality: the Forward Deployed Engineer (FDE).

The 10-20-70 Rule Spells Out Your AI Solution

Top-performing organizations scale more than twice as many AI products as their less mature peers, with more than twice the ROI, and they operate under a fundamentally different, human-centric paradigm. This paradigm is codified in the 10-20-70 rule, a strategic delivery framework pioneered and validated by leading global advisories including the Boston Consulting Group (BCG) and McKinsey & Company.

The 10-20-70 principle provides an empirically backed heuristic for allocating the enterprise budget, engineering effort, and leadership focus required to execute an AI transformation. The framework holds that sustainable AI value creation depends on a highly counterintuitive distribution of resources.

The Surprising 10 Percent: Foundation Models

According to the framework, a mere 10% of an organization's effort and resources should be devoted to the AI algorithms and the foundational models themselves. Paradoxically, this ten percent attracts the most media attention, vendor marketing hyperbole, and initial executive focus. Boardrooms frequently obsess over parameter counts, benchmark scores, and the comparative intelligence of competing foundational models. However, BCG research consistently finds that the raw intelligence of a model is the least differentiating source of value for the enterprise when it remains disconnected from specific business realities.

The Meatier 20 Percent: Technology and Data Infrastructure

The subsequent 20% of the strategic effort encompasses the overarching technology stack, the data infrastructure, the application programming interfaces (APIs), the visualization platforms, and the integration tooling. This layer functions as the digital circulatory system for the artificial intelligence, enabling the routing of information and the execution of basic logic. In the context of strategic workforce planning, for example, this twenty percent involves ensuring dependable job and skills data architectures, establishing sound demand forecasting assumptions, creating seamless integration with financial planning systems, and documenting clear data access protocols.

The Core 70 Percent: People, Processes, and Cultural Transformation

The overwhelming majority of the transformation effort, the remaining 70%, must be ruthlessly dedicated to the human factors that sit beneath the visible surface of technology deployment. This vast undertaking includes redesigning how work gets executed on a daily basis, redefining individual job roles and descriptions, and executing comprehensive organizational change management. Leadership must systematically upskill technical and non-technical teams and foster an environment of seamless collaboration between human employees and agentic systems.
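To make the split concrete, here is a minimal Python sketch of the 10-20-70 arithmetic. The bucket names and the `split_budget` and `allocation_gap` helpers are illustrative assumptions of ours, not part of the framework itself. The rule is a heuristic for effort and focus, so treat the numbers as a conversation starter rather than a finished budget.

```python
# Target shares, in percent, taken from the 10-20-70 rule described above.
# The bucket names are hypothetical labels chosen for this sketch.
TARGET_PCT = {
    "algorithms_and_models": 10,
    "technology_and_data": 20,
    "people_and_processes": 70,
}


def split_budget(total):
    """Split a total budget across the three buckets using the target shares."""
    return {bucket: total * pct / 100 for bucket, pct in TARGET_PCT.items()}


def allocation_gap(actual_spend):
    """Compare actual spend per bucket against the 10-20-70 targets.

    Returns the difference in percentage points (actual share minus target
    share). A negative value means the bucket is underfunded relative to
    the rule.
    """
    total = sum(actual_spend.values())
    if total <= 0:
        raise ValueError("total spend must be positive")
    return {
        bucket: 100 * actual_spend.get(bucket, 0) / total - pct
        for bucket, pct in TARGET_PCT.items()
    }
```

As a hypothetical example, a plan that spends 400,000 on models, 400,000 on infrastructure, and 200,000 on people and processes comes out 50 percentage points short on the bucket the rule weights most heavily.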

Understanding the Context Gap

So why is the seventy percent so difficult to execute? And why does the twenty percent infrastructure layer collapse so frequently? The enterprise software ecosystem is littered with highly successful proofs of concept (POCs) that disintegrate on exposure to live production environments.

The primary cause of this attrition is rarely a failure of the foundational model itself; rather, it is the "context gap." The context gap is the semantic, structural, and operational void between an AI agent's performance on highly curated, static test datasets and its performance on the chaotic, undocumented, and deeply siloed reality of live enterprise data.
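One way to make the context gap visible is to score the same system on a curated test set and on a labeled sample of production traffic, then compare the two. The sketch below assumes plain accuracy as the metric and uses hypothetical helper names (`accuracy`, `context_gap`); a real deployment would likely need task-specific metrics and far larger samples.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that exactly match their labels."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and equal length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def context_gap(curated, production):
    """Accuracy on curated data minus accuracy on production data.

    Each argument is a (predictions, labels) pair. A large positive result
    suggests the system looks better in the lab than it behaves in the field.
    """
    curated_preds, curated_labels = curated
    prod_preds, prod_labels = production
    return accuracy(curated_preds, curated_labels) - accuracy(prod_preds, prod_labels)
```

Tracking this single number over time gives teams a shared, if simplified, signal of whether embedding work is actually closing the gap.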

The Genesis of the Forward Deployed Engineer (FDE)

The persistent, systemic failure of traditional software engineering models to bridge the context gap and operationalize the seventy percent human factor has necessitated the creation and widespread adoption of a specialized, hybrid engineering archetype: the Forward Deployed Engineer (FDE).

Forward Deployed Engineering was pioneered and formalized by Palantir Technologies in the early 2000s, forged in its work with the global intelligence community.

Palantir recognized a fundamental truth about enterprise data integration within highly complex, resistant institutions: these agencies did not lack technology, nor did they require a better algorithm or a cleaner hypothetical data model. What they desperately required was a new kind of engineering personnel capable of bridging the immense chasm between abstract software platform capabilities and mission-critical, profoundly messy operational realities.

While the FDE model originated in the realm of complex data integration, the advent of Generative AI, LLMs, and autonomous agentic systems has made the role far more vital. Companies operating at the frontier of AI, such as OpenAI and Anthropic, have aggressively scaled their internal FDE functions to combat the high failure rate of enterprise AI, where up to 74% of organizations struggle to capture tangible value.

For frontier AI companies, the FDE serves as the ultimate technical diplomat bridging the gap between esoteric research breakthroughs and tangible, production-grade business value. The AI FDE focuses strictly on enterprise utility.

Real Examples of High-Value Deployments

The alignment between the 10-20-70 rule and FDE execution is borne out by case studies from highly regulated industries. Organizations that use FDEs to integrate AI deeply into their operational workflows, rather than taking a superficial wrapper approach, report high adoption rates and significant returns on investment.

Morgan Stanley: Institutionalizing Financial Knowledge

Morgan Stanley serves as a premier historical case study for successful enterprise AI deployment, having begun its journey with Generative AI in 2022, long before the mainstream hype cycle. Partnering deeply with OpenAI's FDE team led by Colin Jarvis, Morgan Stanley avoided the trap of building simplistic chat interfaces, focusing instead on solving high-value problems within their wealth management division.

The OpenAI FDEs embedded themselves within the institution to understand the highly specialized, highly regulated domain of financial advisory. Over several months, they iterated alongside the actual users, building sophisticated evaluation frameworks and strict compliance guardrails natively into the architecture. The result was a highly accurate AI knowledge assistant that drastically streamlined the retrieval of complex financial data, enabling advisors to deliver significantly faster and more accurate counsel.

By obsessively focusing on the seventy percent, ensuring the tool natively fit the advisor's existing, high-pressure daily workflow, the deployment achieved an unprecedented 98% adoption rate among Morgan Stanley advisors. This generated substantial operational efficiency improvements ranging from 20% to 50%, fundamentally altering resource allocation and allowing the firm to redirect human capital toward higher-value client relationship management and business development.

aion Moves from Delivery to Co-Creation

Instead of building a system and handing it off, we run a real AI audit that diagnoses bottlenecks and identifies the top two to three use cases for maximum ROI. In a 14-day bootcamp, we then embed our engineers directly into your engineering teams, working with real data and real constraints. The market has rapidly awakened to this necessity: job postings for forward-deployed engineers surged by over 800% between January and September 2025 alone. The prevailing lesson is clear: AI deployment is no longer viewed as a technology delivery problem; it's a co-creation problem.

Rather than working in silos, aion's forward-deployed engineers (FDEs) work inside your environment, not from a separate office and not through a ticketing system. They build alongside your team, own outcomes with you, and stay until the system runs smoothly without them.

Crucially, unlike pure embedded engineering models, our FDEs don't just build; they transfer knowledge. Every user correction, every edge case, and every production signal feeds back into the system automatically through our Nexus platform. Your AI doesn't just run; it gets better the longer it runs. By the time our engineers step back, your team understands exactly how the system works, how to maintain it, and how to extend it.

aion's AI Research Advantage

In addition to our forward-deployed engineers, aion maintains an in-house research team that builds custom models, fine-tunes foundation models, and continuously optimizes performance. This capability is anchored by four research pillars: custom model development, production-grade evaluation, domain-specific fine-tuning, and self-improving systems. As a result, the AI we deploy isn't generic. It is purpose-built for each client's specific domain, data, and operational context.

AI is not a technology transformation. It's an organizational one. If your plan is mostly technical, you are optimizing the wrong 30%. Forward-deployed engineers and dedicated researchers supercharge your team, ensuring that your entire organization is ready to finally take advantage of all the increasingly powerful models in production. Our FDEs and researchers want to chat.